How to Use Terraform or OpenTofu to Manage Datadog

A simple guide to using Datadog and Terraform

Datadog, a leading monitoring and analytics platform, helps teams monitor many aspects of their organization, whether that is cloud infrastructure, application performance, or general incident management. Applying Infrastructure as Code (IaC) practices with tools like Terraform or OpenTofu makes the management of Datadog easier to scale.

The Datadog Terraform Provider brings Datadog's monitoring capabilities into your infrastructure provisioning process, allowing you to define and manage monitoring resources alongside your infrastructure code. In this post, we'll explore how to use the Datadog Terraform Provider to streamline your monitoring setup, as well as the integrations that Scalr has with Datadog to help you monitor your Terraform operations.

Why Use the Datadog Terraform Provider?

Before diving into the implementation details, let's briefly highlight the benefits of using the Datadog Terraform Provider:

- Infrastructure as Code (IaC): By defining monitoring resources in Terraform configuration files, you treat monitoring infrastructure as part of your overall infrastructure codebase. This promotes consistency, reproducibility, and version control.

- Simplified Workflow: With Terraform, you can automate the provisioning, updating, and deletion of monitoring resources, reducing manual intervention and potential errors.

- Scalability: As your infrastructure grows, managing monitoring resources manually becomes cumbersome. Terraform's declarative syntax allows you to scale your monitoring setup effortlessly.

- Integration: Datadog's Terraform Provider works alongside other Terraform providers, such as the AWS provider, so you can add monitoring to your existing infrastructure provisioning workflows.

Getting Started

To start using the Datadog Terraform or OpenTofu Provider, follow these steps:

- Install Terraform or OpenTofu: If you haven't already, download and install Terraform ( https://www.terraform.io/ ) or OpenTofu ( https://opentofu.org/ ) from its official website.

- Create a Datadog account: If you don't have one yet, you can sign up here: https://www.datadoghq.com/

- Configure your Datadog API Key: Obtain your Datadog API key from the Datadog dashboard. This key identifies your Datadog organization when Terraform calls the Datadog API.

- Configure your Datadog App Key: Obtain your Datadog application key from the Datadog dashboard. The provider uses it together with the API key to authenticate and to read and manage resources through the Datadog API.

Using the Datadog Provider: Examples

Define Required Providers and Provider Configuration

Start by configuring the Datadog Terraform provider in your Terraform code. Open your Terraform configuration file (commonly named main.tf) and add the following block:
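A minimal sketch of such a block, assuming the provider is pulled from the public registry under the DataDog/datadog source address. The key values are placeholders, and the commented-out api_url line is only relevant if your account is not on the default Datadog site:

```hcl
terraform {
  required_providers {
    datadog = {
      source = "DataDog/datadog"
      # Consider adding a version constraint here to pin the provider.
    }
  }
}

provider "datadog" {
  api_key = "your-datadog-api-key" # placeholder
  app_key = "your-datadog-app-key" # placeholder

  # Uncomment and adjust if your account lives on another Datadog site (assumption: EU site shown).
  # api_url = "https://api.datadoghq.eu/"
}
```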

Replace "your-datadog-api-key" with your Datadog API key and "your-datadog-app-key" with the application key you generated earlier. The latest version of the official Datadog Terraform provider documentation can be found in the registry at registry.terraform.io/providers/DataDog/datadog/latest/docs.

Example: Create a Datadog Monitor

Here's a simple example demonstrating how to create a basic monitor using the Datadog Terraform Provider:
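A minimal sketch of what such a monitor could look like. The resource name load_avg, the monitor name, the message text, and the @your-team notification handle are illustrative assumptions; system.load.5 is the standard 5-minute load average metric reported by the Datadog agent:

```hcl
resource "datadog_monitor" "load_avg" {
  name    = "High 5-minute load average on host0"
  type    = "metric alert"
  message = "The 5-minute load average on host0 is above 2.0. Notify: @your-team"

  # Average of system.load.5 over the last 5 minutes, scoped to host0.
  query = "avg(last_5m):avg:system.load.5{host:host0} > 2"

  monitor_thresholds {
    critical = 2 # must match the threshold used in the query
  }

  tags = ["managed-by:terraform"]
}
```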

In this example, we define a monitor that triggers when the 5-minute load average on a host named "host0" exceeds 2.0.

Example: Create Downtime

Here's a simple example demonstrating how to set downtime in Datadog using the Datadog Terraform Provider:
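One possible sketch, assuming a recent provider version that includes the datadog_downtime_schedule resource (older configurations typically use datadog_downtime instead). The scope, the monitor tag, the timestamps, and the message are all placeholder values:

```hcl
resource "datadog_downtime_schedule" "maintenance_window" {
  # Mute notifications for anything reporting from host0.
  scope = "host:host0"

  # Apply the downtime to monitors carrying this (hypothetical) tag.
  monitor_identifier {
    monitor_tags = ["managed-by:terraform"]
  }

  # A single maintenance window; timestamps are ISO 8601 placeholders.
  one_time_schedule {
    start = "2050-01-01T03:00:00Z"
    end   = "2050-01-01T05:00:00Z"
  }

  message = "Scheduled maintenance on host0."
}
```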

Example: Pull a Monitor Datasource

In this example, we'll use the datadog_monitor datasource to pull details about the monitor that was created in a previous step:
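A minimal sketch, assuming a monitor named "High 5-minute load average on host0" already exists in your Datadog account. The filter must match exactly one monitor, and the output names are illustrative:

```hcl
data "datadog_monitor" "load_avg" {
  # Look the monitor up by name; adjust to the name of your own monitor.
  name_filter = "High 5-minute load average on host0"
}

output "monitor_message" {
  value = data.datadog_monitor.load_avg.message
}

output "monitor_thresholds" {
  value = data.datadog_monitor.load_avg.monitor_thresholds
}
```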

This will return details about the monitor such as the message that the monitor has set as well as the thresholds.

Execute Terraform or Tofu Commands

Once you have created your Terraform or Tofu code for the Datadog resources or datasources, you can then execute it with the following commands:

For Terraform:
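The standard Terraform CLI workflow, run from the directory that contains your configuration, looks like this:

```shell
terraform init   # download the Datadog provider and set up the working directory
terraform plan   # preview the changes that would be made in Datadog
terraform apply  # create or update the Datadog resources
```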

For OpenTofu:
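OpenTofu uses the same workflow with the tofu binary:

```shell
tofu init   # download the Datadog provider and set up the working directory
tofu plan   # preview the changes that would be made in Datadog
tofu apply  # create or update the Datadog resources
```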

The init command downloads and initializes the Datadog provider, plan previews the changes, and apply makes them in your Datadog environment. After a successful apply, the state file is created (or updated on later runs).

Best Practices and Advanced Usage

Variables and Dynamic Configurations

Use Terraform variables to make your configurations more dynamic. Instead of hardcoding values, use variables to create reusable and flexible configurations.

Variable values can be supplied through environment variables, which keeps secrets such as the API and application keys out of the code.

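For example, if your configuration declares a variable named api_key, you can set the following before running terraform plan or apply to supply the Datadog API key. Note the underscore: hyphens are not valid in shell environment variable names.

export TF_VAR_api_key=<your-api-key>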
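A minimal sketch of the matching configuration, assuming variables named api_key and app_key (the names are a choice, not a requirement). Terraform maps an environment variable such as TF_VAR_api_key onto the variable api_key, and marking the variables as sensitive keeps their values out of plan output:

```hcl
variable "api_key" {
  type        = string
  sensitive   = true
  description = "Datadog API key, supplied via TF_VAR_api_key."
}

variable "app_key" {
  type        = string
  sensitive   = true
  description = "Datadog application key, supplied via TF_VAR_app_key."
}

provider "datadog" {
  api_key = var.api_key
  app_key = var.app_key
}
```

As an alternative, the Datadog provider can also read the DD_API_KEY and DD_APP_KEY environment variables directly, in which case the provider block needs no key arguments at all.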

Remote State Management

Consider using remote state management to store your Terraform state files securely. Services like Scalr or AWS S3 can be configured as remote backends to store state files. Here is an example of connecting to Scalr:
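A sketch of a backend block for Scalr, assuming Scalr is reached through Terraform's remote backend. The hostname, environment, and workspace values in angle brackets are placeholders for your own account details:

```hcl
terraform {
  backend "remote" {
    hostname     = "<account-name>.scalr.io" # placeholder for your Scalr hostname
    organization = "<environment-id>"        # placeholder: the Scalr environment

    workspaces {
      name = "<workspace-name>" # placeholder
    }
  }
}
```

After adding or changing a backend block, re-run the init command so that the working directory is connected to the remote state.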

Find out more about remote state management here.

Provider Summary

This guide covered the basic steps to configure the Terraform Datadog provider. As you go further, consider the additional Datadog resources that the provider supports, such as roles, logs, and integrations with clouds like AWS. The library.tf documentation for the Terraform Datadog provider is a valuable resource for in-depth information and examples.

By integrating Datadog into your Terraform workflows, you're not just managing infrastructure – you're managing monitoring with the efficiency and scalability that infrastructure as code brings.

Scalr's Integration with Datadog

Scalr, a Terraform Automation and Collaboration platform, provides a best-in-class integration with Datadog. Scalr is featured in the Datadog catalog, making it easier than ever to integrate the two products. Below, we'll explore the seamless integration process and benefits of integrating Scalr with Datadog.

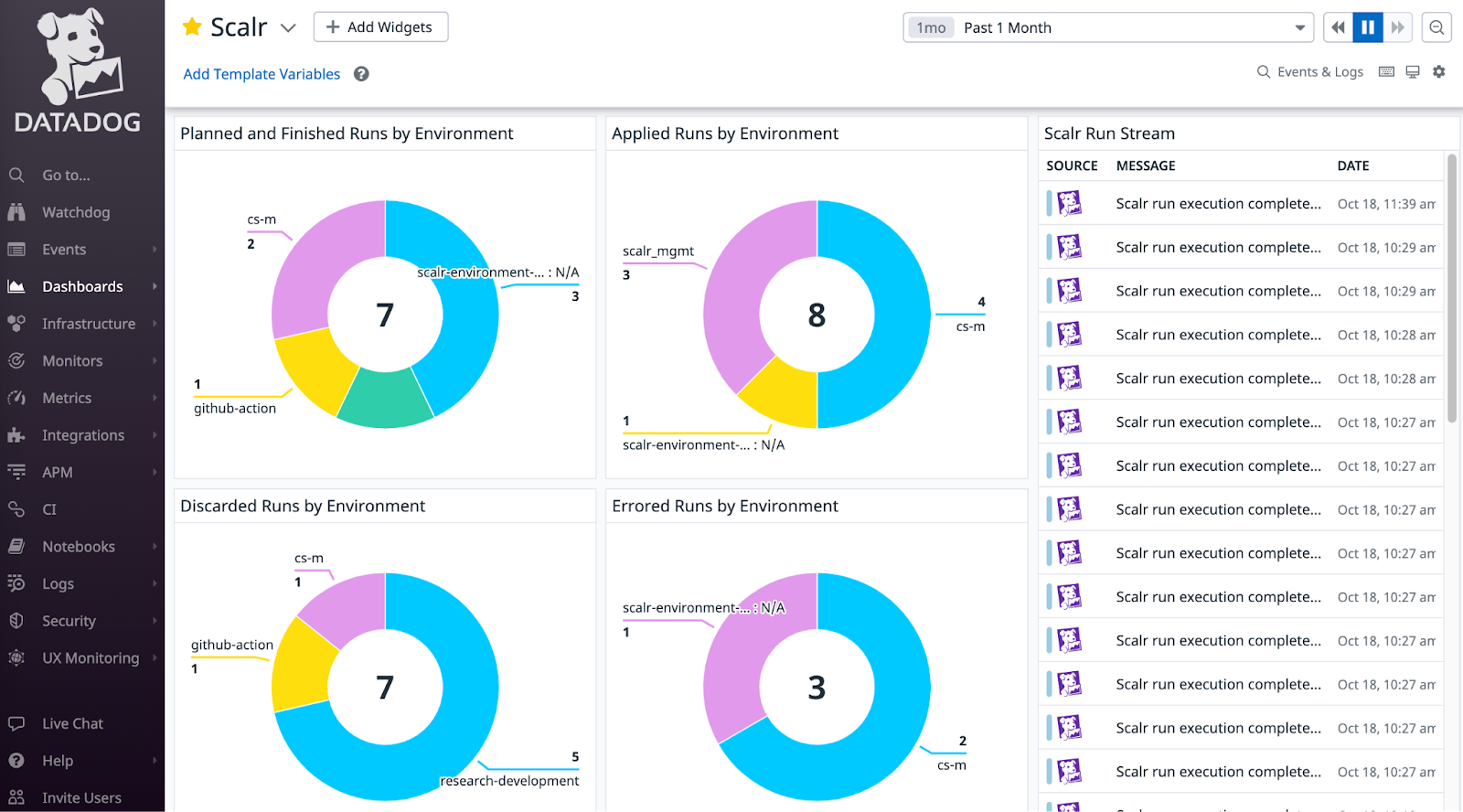

Events

The Scalr to Datadog integration for events can stream event details for Terraform and OpenTofu runs executed in Scalr. Datadog users can build reports based on the source of the run, whether it was from the Terraform CLI, a VCS provider like GitHub, or manually executed through the UI. Users can also track the result of the run, the execution time, and much more.

The events are sent through the Datadog API integration; see the official documentation here.

Metrics

Scalr will send metrics to Datadog for in-depth analysis and reporting such as queued runs, queue state, the number of environments, and workspace count. These metrics are visualized in their out-of-the-box dashboard to help correlate deployments with other infrastructure changes and to track trends within your pipeline. The metrics functionality is an agent-based integration, which means you must use the Datadog agent. See how to install the Datadog agent and enable the Scalr integration here.

Audit Logs

Scalr sends all of its audit logs to Datadog for further analysis. Audit logs allow you to get insights into all actions taken, who performed the action, how it was done, and more. The audit log feature can use the same Datadog connection that is used for events or a new one can be created.

For example, you may want to know how and when a Terraform run was discarded in the Scalr pipeline. Scalr will send the following data to Datadog:

Summary

Datadog provides many capabilities to take your Terraform or OpenTofu operations to the next level. Whether you are using the Datadog provider to manage Datadog itself or using the Scalr integration with Datadog to monitor your Terraform and Tofu operations, Datadog is at the forefront of helping users scale.

For further reading, see here.

Advanced Usage: Dashboards, SLOs, and Resource Mapping

Defining a Dashboard

You can define entire dashboards in code. A common challenge, however, is dynamically generating widgets. With the standard datadog_dashboard resource, this requires nested dynamic blocks, which quickly become verbose.

An alternative is the datadog_dashboard_json resource, which accepts a JSON string. This allows you to use Terraform's for expressions to programmatically generate your widget definitions.

    # variables.tf
    variable "widget_configurations" {
      type = map(object({
        title = string
        query = string
      }))
      default = {
        "cpu_usage" = {
          title = "CPU Usage"
          query = "avg:system.cpu.user{*}"
        }
        "memory_usage" = {
          title = "Memory Usage"
          query = "avg:system.mem.used{*}"
        }
      }
    }

    # main.tf
    resource "datadog_dashboard_json" "programmatic_dashboard" {
      dashboard = jsonencode({
        title       = "Programmatically Generated Dashboard"
        layout_type = "ordered"
        # One timeseries widget per entry in var.widget_configurations
        widgets = [
          for key, config in var.widget_configurations : {
            definition = {
              type  = "timeseries"
              title = config.title
              requests = [
                {
                  q = config.query
                }
              ]
            }
          }
        ]
      })
    }

Defining a Service Level Objective (SLO)

Codifying SLOs is a cornerstone of modern SRE. This example defines a 99.9% availability SLO based on the ratio of successful API requests to total requests.

    resource "datadog_service_level_objective" "api_availability" {
      name        = "API Request Availability"
      type        = "metric"
      description = "99.9% of all API requests should be successful (non-5xx)."

      query {
        numerator   = "sum:trace.http.request.hits{env:prod,service:core-api,!status_code:5xx}.as_count()"
        denominator = "sum:trace.http.request.hits{env:prod,service:core-api}.as_count()"
      }

      thresholds {
        target    = 99.9
        timeframe = "30d"
        warning   = 99.95
      }

      tags = ["service:core-api", "env:prod", "slo:availability"]
    }

Scaling Your Datadog Terraform Configuration

As your usage grows, managing a single, monolithic Terraform configuration becomes a bottleneck. To manage Datadog at scale, consider these additional best practices:

- Use Modules: Encapsulate common patterns into reusable modules. For example, create a "standard service" module that bundles monitors for latency, error rate, and saturation. This promotes consistency and reduces code duplication.

- Separate Environments: Use a directory-based structure to maintain separate state files for each environment (e.g., dev, staging, prod). This provides strong isolation and prevents changes in one environment from impacting another.

- Split Your State: The biggest challenge at scale is a slow, monolithic Terraform state file. Split your state into smaller, more manageable units. Common strategies include splitting by team, by service, or by Datadog resource type. This dramatically improves performance and reduces the blast radius of any single change.
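To make the module idea concrete, here is a rough sketch of what a "standard service" module might contain. Everything in it is an assumption for illustration: the module path, the variable names, the metric names (which depend on what your services actually emit), and the thresholds.

```hcl
# modules/standard-service/main.tf (hypothetical module path)
terraform {
  required_providers {
    datadog = {
      source = "DataDog/datadog"
    }
  }
}

variable "service" {
  type        = string
  description = "Service name used in monitor names, queries, and tags."
}

variable "env" {
  type    = string
  default = "prod"
}

locals {
  # Illustrative queries and thresholds; replace with metrics your services report.
  monitors = {
    error_rate = {
      query    = "sum(last_5m):sum:trace.http.request.errors{env:${var.env},service:${var.service}}.as_count() > 50"
      critical = 50
    }
    cpu_saturation = {
      query    = "avg(last_5m):avg:system.cpu.user{env:${var.env},service:${var.service}} > 90"
      critical = 90
    }
  }
}

# One monitor per entry in local.monitors, all sharing the same naming and tagging scheme.
resource "datadog_monitor" "standard" {
  for_each = local.monitors

  name    = "[${var.env}] ${var.service} ${each.key}"
  type    = "metric alert"
  message = "${each.key} threshold exceeded for ${var.service}."
  query   = each.value.query

  monitor_thresholds {
    critical = each.value.critical
  }

  tags = ["service:${var.service}", "env:${var.env}"]
}
```

A team would then call the module once per service, for example:

```hcl
module "core_api_monitors" {
  source  = "./modules/standard-service" # hypothetical path
  service = "core-api"
}
```

Keeping the monitor definitions in a map means a new standard check is added in one place and rolled out to every service that uses the module.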

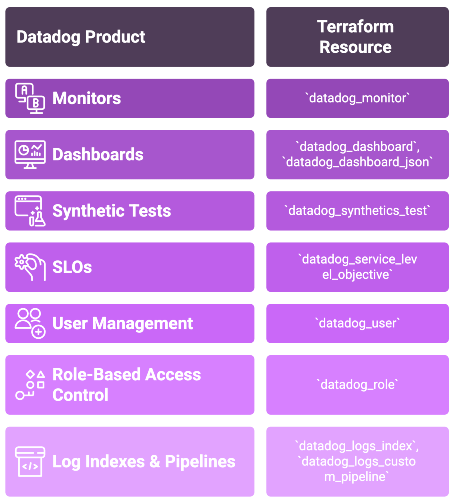

Datadog Product to Terraform Resource Mapping

The following table serves as a quick reference for mapping common Datadog products to their corresponding Terraform resource types.

| Datadog Product | Primary Terraform Resource |
|---|---|
| Monitors | datadog_monitor |
| Dashboards | datadog_dashboard, datadog_dashboard_json |
| Synthetic Tests | datadog_synthetics_test |
| Service Level Objectives (SLOs) | datadog_service_level_objective |
| User Management | datadog_user |
| Role-Based Access Control | datadog_role |
| Log Indexes & Pipelines | datadog_logs_index, datadog_logs_custom_pipeline |

Key Sources

Terraform Datadog Provider Documentation: registry.terraform.io/providers/DataDog/datadog/latest/docs

Datadog API Documentation: docs.datadoghq.com/api/latest/

Terraform Documentation: developer.hashicorp.com/terraform/docs

Terraform Registry (Datadog Modules): registry.terraform.io/search/modules?q=datadog

Datadog Provider GitHub Repository: github.com/DataDog/terraform-provider-datadog